-

Reality-Aware Networks

Funded by: NSF CNS Medium Collaborative grant awarded for Reality-Aware Networks. This project seeks to improve the robustness of wireless sensing and networking technologies through a reality-aware wireless architecture that blends networking and sensing. Robust perception and high-bandwidth networking benefit innovations across a diverse spectrum of high-impact areas, including mixed reality, robotics, and automated vehicles. For example, using such techniques to enhance driver assistance systems or automated vehicles has the potential to save numerous lives. In addition to disseminating results through scholarly publications, the project will engage the wireless and automotive industries to facilitate technology transfer. The project also includes a set of integrated education and broadening participation activities that engage and retain students from underrepresented groups through internship programs and through educational and outreach activities at each participating institution.

-

LiftRight: Quantifying performance measures for fitness domains

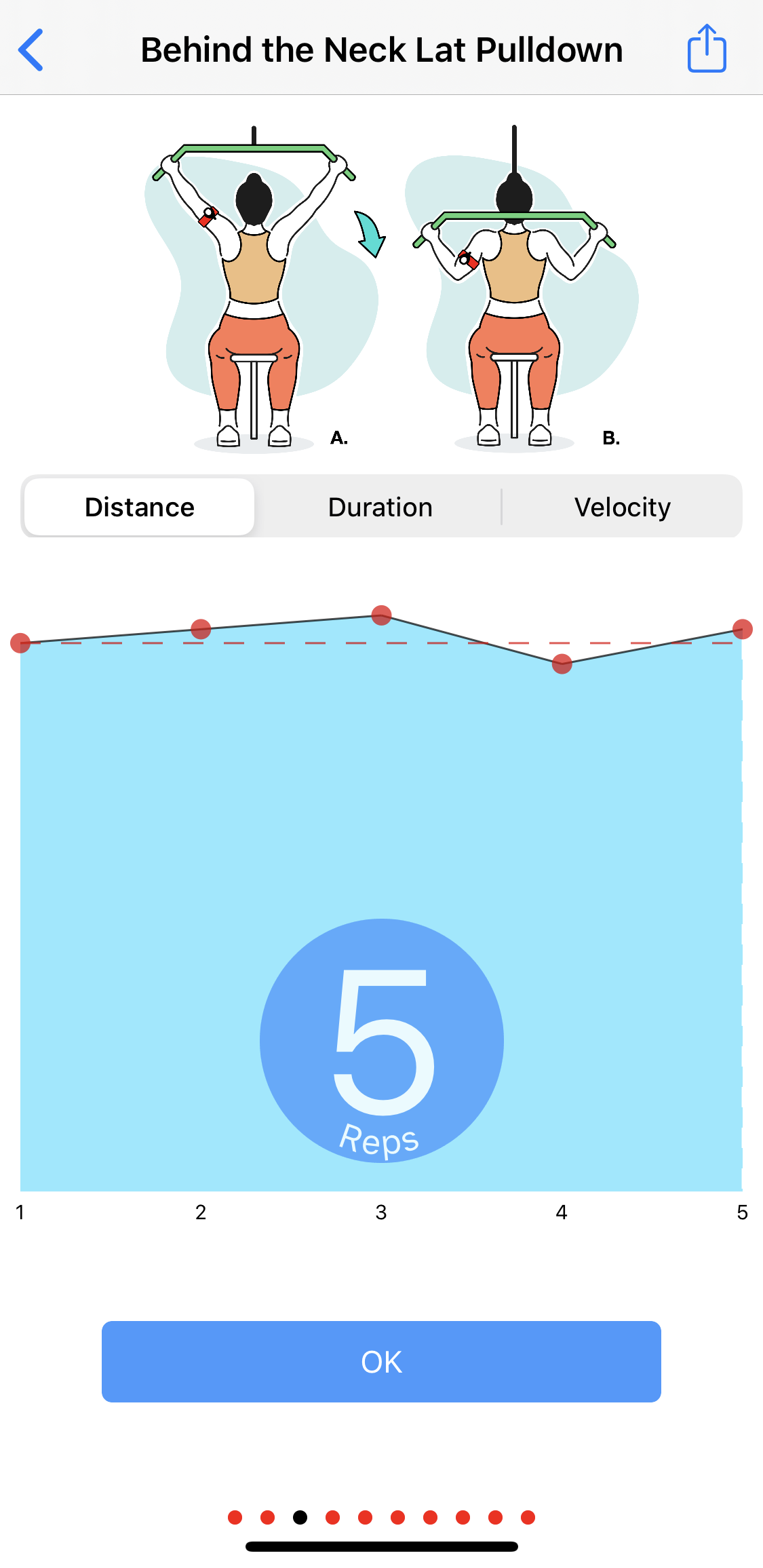

Funded by: NSF CPS Small grant awarded for Performance Monitoring Cyber-Physical System for Emerging Fitness Spaces. LiftRight is a low-cost abstraction that captures upper-body dynamics and accurately computes several performance measures. We leverage an arm-mounted inertial sensor to track the user’s arm and compute the associated kinetics. We segment the time-series workout trace from the IMU into sets, which are further divided into their constituent reps. To measure user performance, LiftRight detects the range of motion and the velocity of each lifting/lowering cycle. LiftRight also determines recovery times and identifies other qualitative attributes, such as when fatigue starts to set in. This can help participants make informed decisions about meeting their goals. We focus on monitoring the fundamental attributes of each workout; capturing these statistics is a precursor to muscle fatigue detection, injury prevention, and overall health improvement.
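
As a rough illustration of the segmentation step, the sketch below assumes the IMU trace has already been reduced to a single arm-elevation angle (degrees) sampled at a fixed rate `fs`. It splits the trace into reps with two hypothetical thresholds (`low`, `high`), computes range of motion and mean lifting/lowering velocity per rep, and starts a new set whenever the pause between reps exceeds a hypothetical `rest_s`, recording that pause as the recovery time. The names and thresholds are illustrative only; this is not LiftRight's actual pipeline.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Rep:
    start: int               # sample index where the lift begins (last low point)
    peak: int                # sample index of the highest arm angle
    end: int                 # sample index where the arm drops back below `low`
    range_of_motion: float   # degrees, peak angle minus starting angle
    lift_velocity: float     # mean degrees/second while lifting
    lower_velocity: float    # mean degrees/second while lowering


def segment_reps(angle: List[float], fs: float, low: float, high: float) -> List[Rep]:
    """Split an arm-elevation trace into reps using two thresholds (low < high)."""
    reps: List[Rep] = []
    in_lift = False
    start = 0  # most recent minimum seen while the arm is down
    peak = 0   # maximum seen while the arm is up
    for i, a in enumerate(angle):
        if not in_lift:
            if a <= angle[start]:
                start = i          # keep the most recent low point
            elif a > high:
                in_lift = True     # arm crossed the upper threshold: a rep is under way
                peak = i
        else:
            if a > angle[peak]:
                peak = i
            if a < low:            # arm is back down: close the rep
                rom = angle[peak] - angle[start]
                reps.append(Rep(
                    start, peak, i, rom,
                    rom / ((peak - start) / fs),
                    (angle[peak] - a) / ((i - peak) / fs),
                ))
                in_lift = False
                start = i
    return reps


def group_sets(reps: List[Rep], fs: float, rest_s: float) -> Tuple[List[List[Rep]], List[float]]:
    """Start a new set whenever the pause between reps exceeds rest_s seconds.

    Returns the sets and the recovery time (seconds) preceding each new set.
    """
    sets: List[List[Rep]] = []
    recoveries: List[float] = []
    for rep in reps:
        gap = (rep.start - sets[-1][-1].end) / fs if sets else 0.0
        if sets and gap <= rest_s:
            sets[-1].append(rep)
        else:
            if sets:
                recoveries.append(gap)
            sets.append([rep])
    return sets, recoveries


# Synthetic trace at 10 Hz: two reps, a 5 s rest, then one more rep.
rep_shape = [0, 30, 60, 90, 60, 30, 0]
trace = rep_shape + rep_shape + [0] * 50 + rep_shape
reps = segment_reps(trace, fs=10.0, low=20.0, high=70.0)
sets, recoveries = group_sets(reps, fs=10.0, rest_s=2.0)
```

Using two thresholds rather than one keeps small wobbles near a single threshold from being counted as extra reps; fatigue cues could then be derived from how per-rep velocity changes across a set.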

In the real-time version of LiftRight, we use game design to merge quantifiable performance metrics with game-like elements that encourage users to perform consistent movements. Our gamified feedback approach explores user needs and analyzes feedback measures. We study how to detect a movement early, in real time, from partial data. To generate actionable information via early event detection in real-world conditions, we define the following design goals: scalable, translatable, unobtrusive, and real-time. We believe that the ability to accurately monitor weight training will lower the barrier to entry and help prevent injuries by supporting users and trainers alike.
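
Early detection from partial data can be pictured as a streaming detector that fires as soon as a movement is unambiguous rather than waiting for the rep to finish. The minimal sketch below assumes the same single arm-angle stream as before and two hypothetical parameters, `onset_deg` and `reset_deg`; the class name and logic are illustrative and not the detector LiftRight uses.

```python
from typing import List, Optional, Tuple


class EarlyRepDetector:
    """Streaming detector that reports lift/lower onsets before a rep is complete."""

    def __init__(self, onset_deg: float, reset_deg: float) -> None:
        self.onset_deg = onset_deg   # rise above the running low that signals a lift
        self.reset_deg = reset_deg   # drop below the running high that signals lowering
        self.lifting = False
        self.extreme: Optional[float] = None  # running low (resting) or high (lifting)

    def update(self, angle: float) -> Optional[str]:
        """Consume one arm-angle sample; return an event name or None."""
        if self.extreme is None:
            self.extreme = angle
            return None
        if not self.lifting:
            self.extreme = min(self.extreme, angle)
            if angle - self.extreme >= self.onset_deg:
                self.lifting, self.extreme = True, angle
                return "lift_start"
        else:
            self.extreme = max(self.extreme, angle)
            if self.extreme - angle >= self.reset_deg:
                self.lifting, self.extreme = False, angle
                return "lower_start"
        return None


detector = EarlyRepDetector(onset_deg=25.0, reset_deg=25.0)
events: List[Tuple[int, str]] = []
for i, a in enumerate([0, 30, 60, 90, 60, 30, 0]):
    event = detector.update(a)
    if event is not None:
        events.append((i, event))
# events == [(1, "lift_start"), (4, "lower_start")]: the lift is flagged at
# sample 1, two samples before the peak at sample 3.
```

Because each `update` call touches only a few scalars, a detector of this shape could run per sample on a wearable and drive game-like feedback the moment a lift begins.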

-

A-spiro: Toward perpetual breathing monitoring

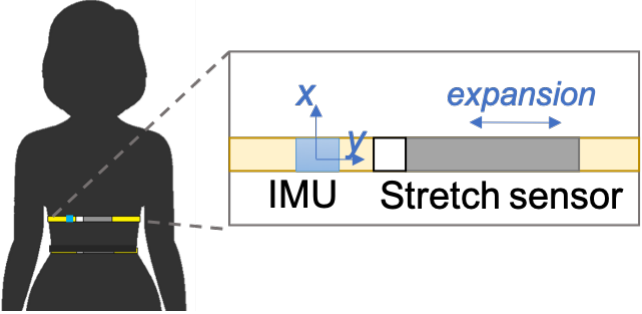

While continuous heart rate monitoring has found its way into fitness trackers and smartwatches, the optimal way to measure real-time variations in breathing is still largely an unsolved problem, albeit one with potential for clinically significant impact. A-spiro is a single-point wearable sensing technique that estimates respiratory flow and volume in addition to respiratory rate. The A-spiro prototype consists of a wearable sensor integrated into a belt worn around the chest. We show that, when coupled with an inertial measurement unit, our system can accurately measure breathing parameters even when the user is ambulatory. A-spiro can model lung hysteresis to separately predict the increasing and decreasing trends of breathing flow. Existing techniques either monitor breathing rate only or estimate volume and flow only when the user is immobile, for example, sleeping. A-spiro incorporates motion-correction techniques to provide accurate estimates even when the user is moving.
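
To make the hysteresis idea concrete, here is a minimal sketch that fits two separate linear calibrations from a raw chest-belt signal to lung volume: one for samples where the signal is rising (treated as inhalation) and one where it is falling (treated as exhalation). Flow is then taken as the finite-difference derivative of the predicted volume. The linear form, all names, and the availability of a reference volume for calibration are assumptions for illustration; A-spiro's actual model and its IMU-based motion correction are not shown.

```python
from typing import List, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


class HysteresisVolumeModel:
    """Separate signal-to-volume calibrations for inhalation and exhalation."""

    def fit(self, signal: Sequence[float], volume: Sequence[float]) -> None:
        rising: Tuple[List[float], List[float]] = ([], [])
        falling: Tuple[List[float], List[float]] = ([], [])
        for i in range(1, len(signal)):
            bucket = rising if signal[i] >= signal[i - 1] else falling
            bucket[0].append(signal[i])
            bucket[1].append(volume[i])
        self.inhale = fit_line(*rising)
        self.exhale = fit_line(*falling)

    def predict(self, signal: Sequence[float]) -> List[float]:
        out = []
        for i, s in enumerate(signal):
            # The first sample has no trend yet; treat it as inhalation.
            inhaling = i == 0 or s >= signal[i - 1]
            slope, intercept = self.inhale if inhaling else self.exhale
            out.append(slope * s + intercept)
        return out


def flow(volume: Sequence[float], fs: float) -> List[float]:
    """Flow as the finite-difference derivative of volume (volume units per second)."""
    return [(volume[i] - volume[i - 1]) * fs for i in range(1, len(volume))]


# Synthetic calibration data whose inhale and exhale branches differ.
sig = [0, 1, 2, 3, 2, 1, 0, 1, 2, 3]
ref = [2 * s + 1 if i == 0 or s >= sig[i - 1] else 1.5 * s + 0.5
       for i, s in enumerate(sig)]
model = HysteresisVolumeModel()
model.fit(sig, ref)
vol = model.predict(sig)
rates = flow(vol, fs=10.0)
```

A single calibration line would average the two branches and mis-estimate volume on both; keeping one model per direction is the simplest way to represent that asymmetry.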

-

Panoptes: Infrastructure Camera Control

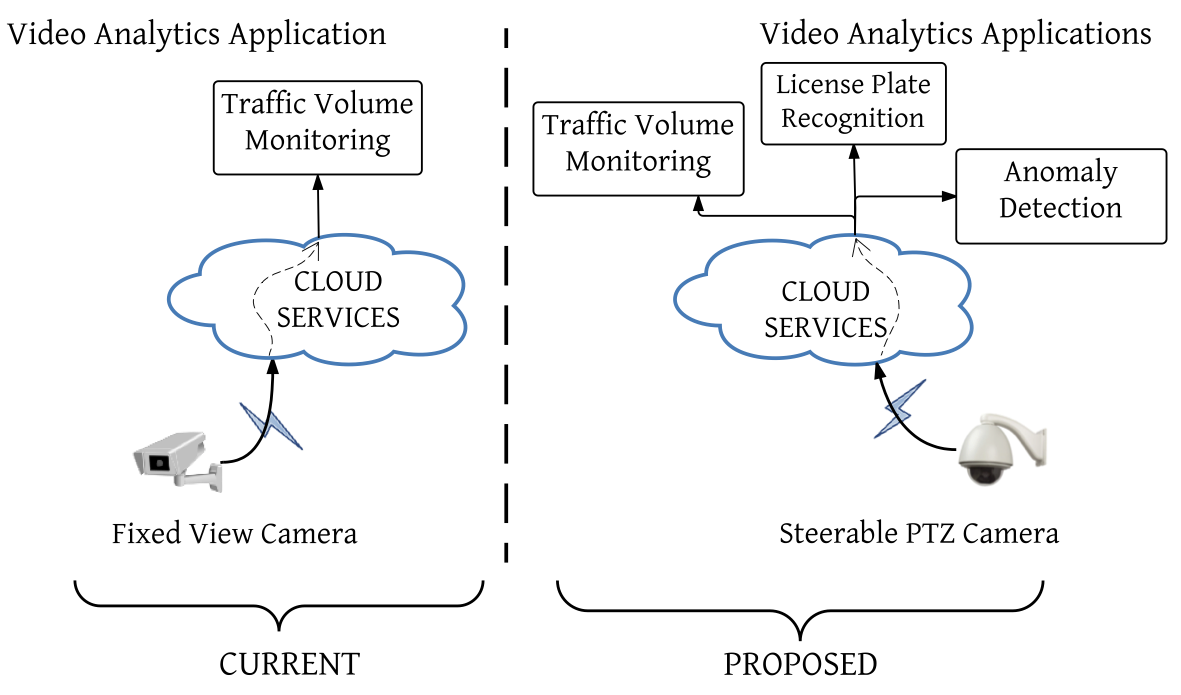

Panoptes is a novel view virtualization system for steerable infrastructure cameras. Infrastructure cameras are often installed with a specific application in mind, and their view is carefully adjusted to fit that application. Supporting multiple simultaneous analytics applications on the same camera is challenging because their view and image requirements tend to differ. Panoptes breaks this one-to-one binding between camera and application by leveraging the steerable nature of many of these cameras. We also designed a mobility-aware scheduling algorithm that anticipates object mobility and network/steering latency to maximize the number of simultaneous applications served while minimizing the number of relevant events missed.
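
The sketch below illustrates what "anticipating mobility and steering latency" can mean for a single scheduling decision. It makes strong simplifying assumptions: steering is reduced to one pan axis, each application tracks one object predicted with a constant-velocity model, and the scheduler greedily picks the view that serves the most applications at the moment the camera arrives, breaking ties by earliest arrival. All names and parameters are hypothetical; this is not the Panoptes algorithm itself.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class AppRequest:
    name: str
    obj_pan: float    # current pan angle (degrees) of the object this app cares about
    obj_speed: float  # predicted angular velocity of that object (degrees/second)
    deadline: float   # seconds until the object leaves the app's area of interest


def pan_at(req: AppRequest, t: float) -> float:
    """Constant-velocity prediction of the object's pan angle t seconds from now."""
    return req.obj_pan + req.obj_speed * t


def arrival_time(cur: float, target: float, steer_speed: float, latency: float) -> float:
    """Command latency plus time to slew from cur to target at steer_speed deg/s."""
    return latency + abs(target - cur) / steer_speed


def choose_view(requests: List[AppRequest], camera_pan: float, fov: float,
                steer_speed: float, latency: float) -> Optional[Tuple[float, float, List[str]]]:
    """Greedy pick of the next view: serve the most apps, then arrive soonest."""
    best = None
    for req in requests:
        # Aim where the object will be once the camera gets there (one refinement step).
        aim = pan_at(req, arrival_time(camera_pan, req.obj_pan, steer_speed, latency))
        t = arrival_time(camera_pan, aim, steer_speed, latency)
        served = [r.name for r in requests
                  if t <= r.deadline and abs(pan_at(r, t) - aim) <= fov / 2]
        key = (len(served), -t)
        if best is None or key > best[0]:
            best = (key, aim, t, served)
    if best is None:
        return None
    _, aim, t, served = best
    return aim, t, served


requests = [
    AppRequest("A", obj_pan=30.0, obj_speed=0.0, deadline=5.0),
    AppRequest("B", obj_pan=35.0, obj_speed=0.0, deadline=5.0),
    AppRequest("C", obj_pan=-60.0, obj_speed=0.0, deadline=5.0),
]
view = choose_view(requests, camera_pan=0.0, fov=20.0, steer_speed=90.0, latency=0.1)
# view[0] == 30.0 and view[2] == ["A", "B"]: one view at 30 degrees covers both A and B.
```

Checking each application's deadline against the predicted arrival time is what lets a scheduler of this kind skip views whose events would already be missed by the time the camera gets there.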

-

LookUp: Enabling Pedestrian Safety Services via Shoe Sensing

LookUp is a novel shoe-sensor-based approach for sub-meter location classification in noisy outdoor urban environments. Pedestrians are most at risk when crossing the street. LookUp estimates pedestrian risk by sensing whether a pedestrian is walking safely on the sidewalk or has transitioned into the street. Unlike earlier approaches based on dead reckoning and step counting, it can measure the inclination of the ground a person is walking on and detect roadway features, such as ramps and curbs, that precisely indicate sidewalk-street transitions. These detections can be used to warn distracted pedestrians, or to alert oncoming vehicles to the pedestrian's presence in the street. LookUp was exhaustively evaluated in the high-clutter Manhattan environment.
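
Ground inclination can be estimated from a shoe-mounted accelerometer because, while the foot is flat and still, the sensor measures essentially only gravity. The minimal sketch below assumes a sensor frame with x pointing toward the toe and z pointing out of the sole, treats samples whose magnitude is close to 9.81 m/s² as stance samples, and labels a step as a ramp when the estimated pitch exceeds a hypothetical 4-degree threshold. All names and thresholds are illustrative; LookUp's actual features and its curb detection are not reproduced here.

```python
import math
from typing import List, Sequence, Tuple

Sample = Tuple[float, float, float]  # (ax, ay, az) in m/s^2; x toward toe, z out of the sole
G = 9.81


def stance_samples(samples: Sequence[Sample], tol: float = 0.3) -> List[Sample]:
    """Keep samples whose magnitude is close to gravity, i.e., the foot is roughly still."""
    return [s for s in samples
            if abs(math.sqrt(s[0] ** 2 + s[1] ** 2 + s[2] ** 2) - G) <= tol]


def ground_pitch_deg(stance: Sequence[Sample]) -> float:
    """Ground inclination in degrees (positive = uphill toward the toe).

    Expects at least one stance sample; averages them to reduce noise.
    """
    n = len(stance)
    ax = sum(s[0] for s in stance) / n
    ay = sum(s[1] for s in stance) / n
    az = sum(s[2] for s in stance) / n
    return math.degrees(math.atan2(ax, math.hypot(ay, az)))


def label_step(pitch_deg: float, ramp_deg: float = 4.0) -> str:
    """Classify one step's ground inclination."""
    if pitch_deg > ramp_deg:
        return "ramp_up"
    if pitch_deg < -ramp_deg:
        return "ramp_down"
    return "flat"


# A foot resting on a 10-degree incline, plus one swing-phase sample to be discarded.
theta = math.radians(10.0)
flat_on_ramp = (G * math.sin(theta), 0.0, G * math.cos(theta))
swing = (15.0, 2.0, 5.0)  # foot in motion: magnitude far from gravity
stance = stance_samples([flat_on_ramp, swing, flat_on_ramp])
pitch = ground_pitch_deg(stance)
label = label_step(pitch)
```

A run of ramp-labeled steps followed by flat ground is the kind of pattern that could then be interpreted as a candidate sidewalk-street transition.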

-

Detection of diabetic retinopathy using smartphones

Diabetic retinopathy is one of the leading causes of blindness worldwide. We propose a low-cost, portable, smartphone-based decision support system for initial screening of diabetic retinopathy using image analysis and machine learning techniques. We attach a smartphone to a direct handheld ophthalmoscope, and the phone captures fundus images as seen through the ophthalmoscope. Through this mobile eye-examination system, we envision making early screening for diabetic retinopathy accessible, especially where dedicated ophthalmology centers are expensive.